AI Voice Scams in 2026: Why Hearing A Familiar Voice Is No Longer Proof

Are you still treating a familiar voice as proof that someone is who they say they are?

And honestly, that feels justifiable. If a voice sounded like your boss, your coworker, your child, or your bank, you would naturally trust it more. Most people would.

Now that shortcut is risky.

AI voice scams are getting more convincing, more scalable, and much harder to spot. They now sit at the center of a new wave of social engineering attacks, and the old rules for trusting a phone call don’t apply anymore.

Regulators, law enforcement, and threat reports have warned that scammers can clone voices from short audio samples and use them to commit fraud, impersonation, and distress scams.

The result is simple: hearing a familiar voice is no longer enough to prove anything.

What Are AI Voice Scams?

AI voice scams are a form of fraud where attackers use artificial intelligence to clone or mimic a real person’s voice, then use that cloned voice to deceive, pressure, or manipulate a target.

That could mean a fake call from a “family member” in trouble, a voice message from a “boss” requesting an urgent payment, or a cloned voice used in a job interview as part of an identity fraud attempt. Experian has identified deepfake job candidates as a significant fraud risk in 2026.

In other words, the scam is no longer just about pretending to be someone else. It can now sound like them, too.

How AI Voice Scams Work

Most AI voice scams follow the same basic formula.

First, the attacker gets a voice sample. That sample might come from social media videos, podcasts, voicemails, interviews, conference recordings, or anywhere else a person’s voice is publicly available.

Next, the attacker uses that sample to generate speech that sounds close enough to the real person to slip past suspicion. Recent reports suggest some tools can now mimic a person’s voice from only a few seconds of audio, and lawmakers are starting to pressure voice-cloning companies to take abuse prevention more seriously.

In some cases, the voice is not doing all the heavy lifting either. Attackers may also spoof the caller ID so the number looks familiar, trusted, or at least less suspicious. That gives the scam one more layer of believability before the person even answers.

Then comes the social engineering part. The message is designed to feel believable and push the target into acting quickly, before they stop to verify the request.

So the scam is not just technical.

It is psychological.

Why Hearing A Familiar Voice Is No Longer Proof

This is the real problem.

As humans, we naturally attach trust to familiar voices. A known voice can provide comfort, confidence, urgency, and authority in seconds. That’s a very powerful shortcut, especially when the message sounds routine or emotionally charged.

But that kind of trust doesn’t hold up like it used to.

Europol has warned that AI-powered voice cloning and live deepfakes are amplifying fraud, extortion, and identity theft (p. 17).

So yes, a voice may still sound familiar. That doesn’t make it real.

Why AI Voice Scams Are More Dangerous Than Older Scam Calls

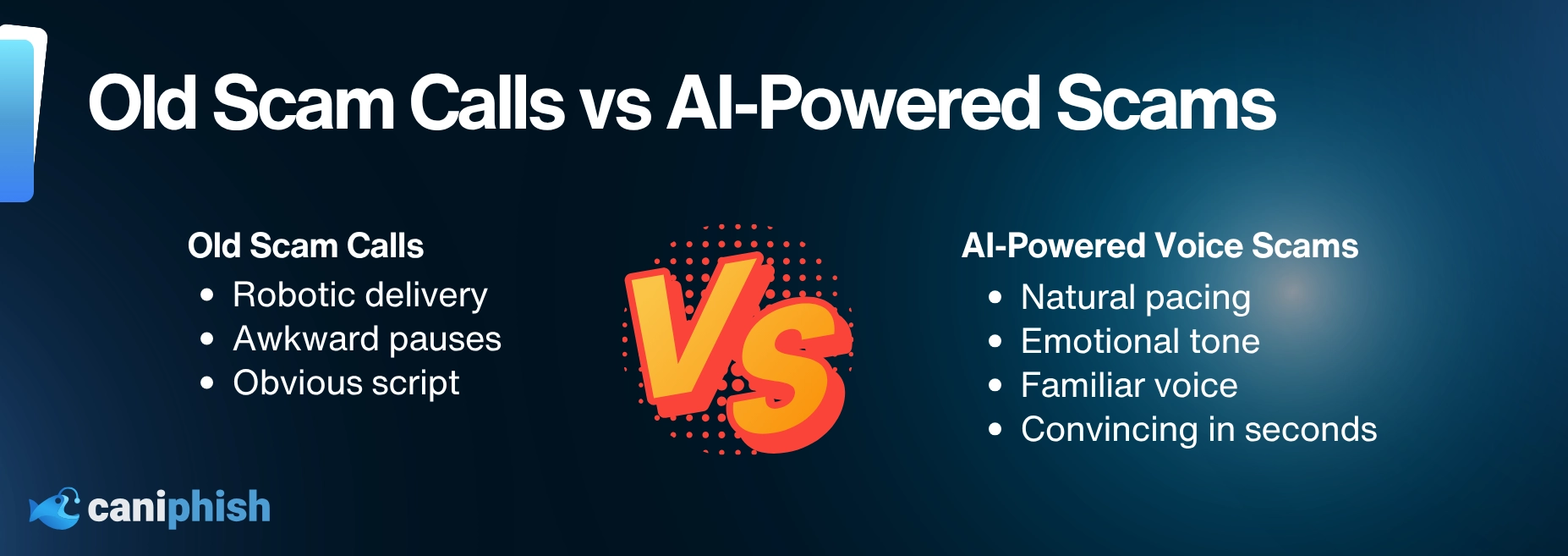

Older voice scams were often easier to spot.

The caller sounded off. The script was awkward. There was usually a pause before they started speaking. The whole thing felt like it had been written in a rush by someone who had never actually met a human being.

Well, AI changed all that. It is not just copying words. It can also imitate pitch, tone, pacing, and inflection.

Old scam calls vs AI voice scams:

- Old: robotic delivery, awkward pauses, obvious script.

- New: natural pacing, emotional tone, familiar voice, convincing in seconds.

It sounds cleaner, more convincing, and a lot less like the usual scammer nonsense. The timing is better. The delivery feels more natural. And if the voice sounds enough like the person the scammer is pretending to be, that can be enough to lower someone’s guard.

That is what makes modern voice scams more dangerous. They don’t have to be perfect. They just have to be convincing enough at the right moment.

Where AI Voice Scams Are Showing Up In 2026

AI voice scams aren’t limited to a single victim or a single type of setup. They are showing up in hiring processes, finance teams, homes, and executive workflows. Here are some of the most common ways attackers are using them in 2026.

Executive Impersonation

Executive impersonation is one of the highest-risk AI voice scam categories in 2026.

An attacker uses AI to clone or imitate a senior leader’s voice, then calls or sends a voice message to someone in admin or finance. The request usually sounds urgent and routine, which is a dangerous mix. It could be an immediate payment, an urgent change in bank details, or a request to share sensitive information. If the voice sounds plausible, employees may treat it like business as usual instead of stopping to verify it.

Family Emergency Scams

Also known as the "grandparent scam" or "virtual kidnapping" scam, this one pulls on the heartstrings or goes for full panic mode. The victim gets a fake call from a loved one in distress: a partner, a child, another family member, or even a friend. The voice may claim that they have been kidnapped, arrested, or hurt in an accident, or simply that they are stuck somewhere and need urgent financial assistance. The goal is to trigger fear before the person has time to think clearly or verify what is happening. It is emotional manipulation with a cloned voice doing the dirty work.

Fake Job Interviews And Hiring Fraud

This one is especially nasty because it’s not just about stealing money. It can be about getting hired under a fake identity. Fraudsters can use deepfake audio tools during remote interviews to hide their true identities. If they get through the hiring process, they could gain access to the company’s systems, internal data, or sensitive information. So the scam doesn’t end when the interview does. That’s just the beginning of the problem.

Broader Deepfake Fraud

Not all scams fall neatly into one box. Some are part of a larger fraud attempt involving fake executives, vendors, recruiters, or service providers. A cloned voice might be used alongside a phishing email or a backup payment scam, or simply to give a suspicious message the extra credibility it needs. That’s exactly why voice cloning fraud is so dangerous. It’s becoming another tool that attackers can slot into all kinds of fraud.

Real World AI Voice Scam Example: The WPP CEO Deepfake

In 2024, scammers targeted advertising giant WPP using an AI-generated clone of CEO Mark Read’s voice. They also used his public image to create a fake WhatsApp account and set up a Microsoft Teams meeting that appeared to involve other senior leaders.

The goal was to trick a company executive into handing over money and personal details. WPP stopped the scam before it caused real damage, but the takeaway was ugly. If AI can fake a CEO’s voice well enough to make a call feel legitimate, then old assumptions about trust and verification start falling apart fast.

The WPP case is one of the clearest signals yet that voice-based verification is no longer safe for high-value decisions.

How Businesses Can Protect Themselves From AI Voice Scams

Businesses need to stop relying solely on voice when something important is at stake. That said, it doesn’t mean every phone call should be treated like a hostage negotiation.

The first step is simple: a familiar voice should never be the only thing approving a high-risk request.

When the request involves something important like money, payroll, bank changes, credentials, or access, the voice alone isn't enough. You need a second layer of checks.
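To make that rule concrete, here is a minimal sketch of how a team might write it down as a policy check. The category names and check names are hypothetical, chosen to mirror the examples above, and the whole thing is an illustration rather than a finished control. The point it shows is simple: a familiar voice is never counted as a check.

```python
from dataclasses import dataclass, field

# Hypothetical categories, mirroring the examples in the text
# (money, payroll, bank changes, credentials, access).
HIGH_RISK_CATEGORIES = {"payment", "payroll", "bank_change", "credentials", "access"}

# Hypothetical names for checks that do not depend on the voice itself.
INDEPENDENT_CHECKS = {
    "callback_on_known_number",
    "second_channel_confirmation",
    "standard_approval_workflow",
}


@dataclass
class Request:
    category: str
    requested_by: str
    voice_sounded_familiar: bool = False
    completed_checks: set = field(default_factory=set)


def is_high_risk(request: Request) -> bool:
    """A request is high risk if it falls into one of the listed categories."""
    return request.category in HIGH_RISK_CATEGORIES


def can_proceed(request: Request) -> bool:
    """Low-risk requests pass; high-risk ones need an independent check.

    voice_sounded_familiar is deliberately ignored: it is recorded on the
    request but never contributes to approval.
    """
    if not is_high_risk(request):
        return True
    return bool(request.completed_checks & INDEPENDENT_CHECKS)
```

In this sketch, a payment request from a voice that sounds exactly like the CEO is still blocked until someone records a callback, a second-channel confirmation, or the normal approval workflow.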

How To Verify A Suspicious Voice Call

If a call feels off, or the request lands in that high-risk category, don’t let the voice do the convincing. Here’s what to do instead:

- Pause the conversation. Don’t make decisions while you’re still on the call. Urgency is the scam’s best weapon. Take that away and most of it falls apart.

- Hang up and call back on a trusted number. Use a number you already have on file, not one the caller gave you. If it’s a coworker, check your internal directory. If it’s a family member, use the number already saved in your phone.

- Verify through a second channel. Send a message on a platform you know is real. A work chat tool, a known email address, or a video call all work. A cloned voice can fake a call. It has a much harder time faking a second channel at the same time.

- Run it through your normal approval process. No matter how routine or urgent the request sounds, stick to the standard checks for payments, bank changes, or access requests. If the caller pushes back on any of that, that’s your answer.
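The callback step is the one people most often get wrong, so here is a small sketch of the idea. It assumes a hypothetical directory of numbers recorded before the call ever happened. The names and numbers are made up for illustration. Notice that the number the caller offers is accepted as an argument and then never used.

```python
from typing import Optional

# Hypothetical directory of numbers that were on file before the call.
TRUSTED_DIRECTORY = {
    "cfo": "+1-555-0100",
    "payroll": "+1-555-0101",
}


def callback_number(claimed_identity: str,
                    number_offered_by_caller: Optional[str] = None) -> Optional[str]:
    """Return the on-file number for the claimed identity, or None.

    The number offered during the call is intentionally ignored, because
    it comes from the same untrusted source as the voice.
    """
    key = claimed_identity.strip().lower()
    return TRUSTED_DIRECTORY.get(key)


def next_step(claimed_identity: str,
              number_offered_by_caller: Optional[str] = None) -> str:
    """Describe what to do after hanging up."""
    number = callback_number(claimed_identity, number_offered_by_caller)
    if number is None:
        return "No trusted number on file: verify through a second channel."
    return f"Hang up and call back on {number}."
```

If there is no number on file, the sketch falls through to the second-channel step instead of trusting whatever the caller provided.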

Check out our blog, which goes through the 10 techniques used to spot AI-generated audio.

A lot of these scams lean on the same tricks. Urgency. Secrecy. Strange payment requests. Pressure to act first and check later. If the call is trying to bulldoze its way past normal checks, that is not a glitch. That is the strategy.
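As a rough illustration, those tricks can be turned into a simple checklist that runs over a call note or a voicemail transcript. The phrase lists below are hypothetical examples, not a vetted detection rule set, and plain keyword matching like this will miss plenty. It is a training aid for spotting the pattern, not a replacement for verification.

```python
from typing import List

# Hypothetical phrase lists, one per red flag named in the text.
RED_FLAGS = {
    "urgency": ("right now", "immediately", "urgent", "before end of day"),
    "secrecy": ("don't tell", "keep this between us", "confidential"),
    "strange payment": ("gift card", "wire transfer", "crypto"),
    "act first, check later": ("skip the approval", "no time to verify", "check later"),
}


def red_flags(text: str) -> List[str]:
    """Return the names of the red flags whose phrases appear in the text."""
    # Normalize case and curly apostrophes so "Don’t tell" still matches.
    lowered = text.lower().replace("\u2019", "'")
    return [
        name
        for name, phrases in RED_FLAGS.items()
        if any(phrase in lowered for phrase in phrases)
    ]


message = "I need this wire transfer done immediately, and keep this between us."
print(red_flags(message))
```

Any message that trips one or more of these deserves the verification steps above before anyone acts on it.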

Yes, that’s less convenient. Fraud is also inconvenient. Usually, in a much more expensive way.

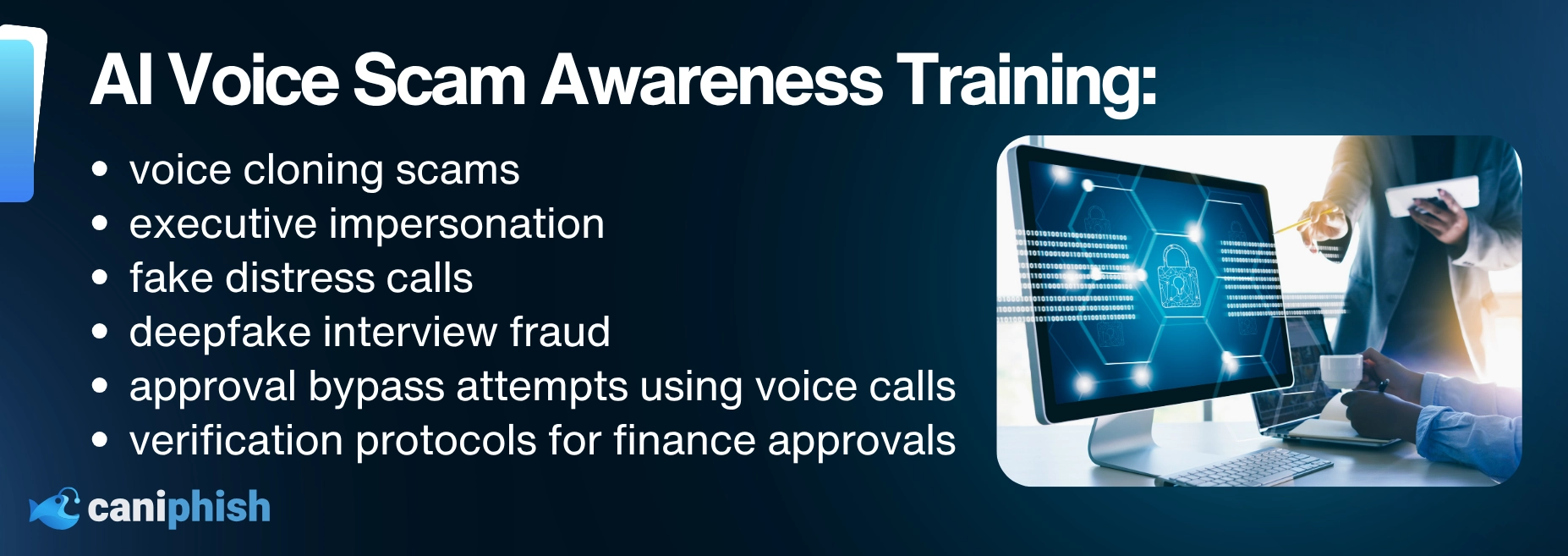

What To Include In AI Voice Scam Awareness Training

Businesses should also update awareness training to include:

- voice cloning scams

- executive impersonation

- fake distress calls

- deepfake interview fraud

- approval bypass attempts using voice notes or calls

- verification protocols for finance and payroll approvals

The Bottom Line on AI Voice Scams

There was a time when hearing a familiar voice meant something. Now it means far less than people think.

AI voice scams work because they exploit one of the oldest trust signals people have. If it sounds like someone you know, someone you work with, or someone you answer to, you are more likely to react before you verify.

That is exactly what attackers are counting on. The problem is not just that AI can fake a voice. It is that people still want to trust what they hear.

And in 2026, that is no longer a safe bet.

If your team hasn’t reviewed its voice-based approval process recently, now is the time.

Frequently Asked Questions

What should I do if I get a suspicious voice call?

If the call feels suspicious, stop there. Hang up, verify the request through a trusted contact method, and stick to your normal checks. The more the caller tries to control the pace, the more careful you should be.

Are AI voice scams being used against businesses?

Yes. They are being used in business email compromise, executive impersonation, hiring fraud, and broader deepfake-enabled fraud schemes.

How can you verify if a voice call is real?

Use a trusted phone number, a separate communication channel, or an existing internal approval process to verify the request. Do not rely on the voice alone. The FTC specifically recommends calling back using a number you know is real.

Why are AI voice scams so effective?

They work because they combine familiar voices with urgency, emotion, and pressure. That makes people more likely to act quickly before they stop to verify what is happening.

Should families use a codeword for emergencies?

Yes, and it’s one of the simplest defenses available. A family codeword or question can make it harder for a scammer using a cloned voice to bluff their way through a panic call. It is not foolproof, but it is a lot better than trusting the voice alone.

Can AI clone a voice from a short clip?

Yes. Several tools can now generate a usable voice clone from just a few seconds of audio, which is why publicly available recordings from podcasts, social media, or voicemail greetings are enough for attackers to work with.

An Operations Analyst on a mission to make the internet safer by helping people stay a step ahead of cyber threats.